At work, I have started the implementation of a new data import backend. The current one has worked well, but as the number of products in the tracdelight network grows into the millions, the time needed to update the product list for every advertiser grows and the MySQL DB can no longer keep pace with it.

The new data import should be able to import or update a million products much quicker than the current 2 hours needed, and it should be able to keep scaling. MongoDB seems like a good candidate because it should be much faster than SQL. The new import will also be able to be executed as distributed jobs.

The new product store backend being written in Python, what abstraction should we use on top of MongoDB?. Working directly with PyMongo is an option, but usually an Object Document Mapper (like SQLAlchemy or Django ORM) is preferable.

As it was my first time with MongoDB, I researched a bit, and MongoEngine looked best, as it has a similar syntax to Django and seems well supported. But will it be fast enough, or does it introduce too much overhead compared to bare PyMongo? Not being one that just assumes things, I went ahead and checked that out myself.

The system I’ve built reads rows from a CSV, validates fields (for example that a given field is a valid price), and saves them to an storage backend. It is very modular, as not only is that usually a good design decision, but it allows me to have pluggable storage backends:

- to_file backend. This just drops the validated row or product into a new file. We need this as a baseline to now the absolute maximum speed rows could be saved at.

- An SQL backend. This will serve as a comparison to a classical SQL system. I use the Django ORM here, and to not be unfair I enable the transactions.commit_on_success() decorator and use a sqlite in-memory DB.

- MongoEngine. The code is nearly the same as in the Django ORM case. You can find it here.

- PyMongo. This will directly use the MongoDB Python driver, using safe=True. The importing method looks like this.

- devnull. We all want to be web scale, so I went ahead and implemented a devnull backend.

The code is purposely a naive implementation, to test how fast each back end is without resorting to optimizations, hacks or tricks. There are probably ways of making it much faster. And even though the production code will be very different to this early experiment, it is not an evil, synthetic micro-benchmark: on the contrary, it is a real application!

Benchmark details

- Average of three runs

- Importing 2 small-medium sized product lists with 4000 and 8000 rows

- 100% updates (will be the most common case)

- Code flow:

- Set all existing entries to old=True

- Upsert all new entries and set old=False

- Delete all entries still marked old=True

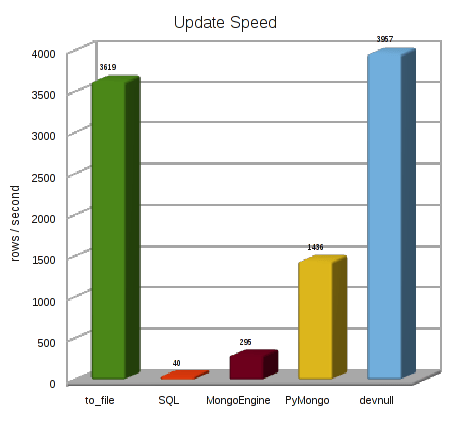

Results

Some take aways

- MongoEngine is 7x faster than SQL. This is actually eerily similar to Peter’s results!

- PyMongo 5x faster than MongoEngine

- PyMongo 36x faster than SQL

- Incredibly, the devnull backend is even faster than writing to a file!

- PyMongo 40% as fast as writing to a file

That last bit of data is nothing short of amazing. Since then, I have added a bit of an abstraction to the PyMongo storage engine, more MongoDB operations, per product/per field timestamps and more, and it is still every bit as fast.

This experiment has settled the matter: we will be using PyMongo directly (I am tempted to use the super-cool devnull backend, but I have heard that it is somewhat difficult to later retrieve data).

In the next post, I will be explaining how I’ll go about abstracting PyMongo a little bit, without taking away one ounce of its incredible speed.

I know I benchmarked MongoEngine to be faster than MongoKit but as far as I know MongoEngine doesn’t have one amazingly powerful feature: Direct access to pymongo!

With MongoKit you can quickly bypass the “ORM” part and go straight to the source. Instead of getting wrapped objects you get python python dicts. I recently ran a benchmark where I did a bunch of MyMongoKitModel.find() loops and got the objects out. It took 400 seconds (would probably have taken 400 minutes with SQL) and then with one single change of the loop I was able to go straight to the source and it took 17 seconds.

Here’s how it works:

def find_brother(self): me = self.db.Brother.one({'name':'Peter'}) me.searches += 1 me.save() count_candidates = 0 for other in self.db.Brother.collection.find({'name':{'$ne':'Peter'}}): if me.interests == other['interests']: count_candidates += 1Basically, for all O(1) operations use the MongoKit mapper to get a proper instance (with all your methods available) and for all O(n) type operations use the raw mode. THAT will give you exactly what you need. The uber speed where it matters and the practicality of the object mapper.

That is very cool. I didn’t know that MongoKit could directly call PyMongo.

But I am not sure that it is worth it to use an Object Mapper if you are going to use PyMongo for a lot of things. In fact the code you posted is not very different from my own custom abstraction.

I’ll investigate the matter in my next post 😉

Why didn’t you use MongoKit for your benchmark? I’m just curious.

Also, isn’t it possible to use pymongo directly with MongoEngine?

Thanks for your post,

Peter’s results already had shown that MongoKit was a tiny bit slower than MongoEngine, so I didn’t feel the need to test it as well. Choosing one over the other is a question of feature or preferences.

But of course I didn’t know that MongoKit allowed using pymongo directly. As far as I know that is not currently possible with MongoEngine.

You have access to pymongo directly through mongoengine as well. I haven’t used it, but have seen many people post that they have.

MonoEngine added Raw queries in version 0.4, but do you have full access to PyMongo for writes? Maybe I need to check it out again…

Still, for the kind of fine-grained control I require an OMM is not a good choice.

What it would be interesting is to benchmark MongoKit and MongoEngine when they use pymongo directly…

if /dev/null is fast and web scale i will use it